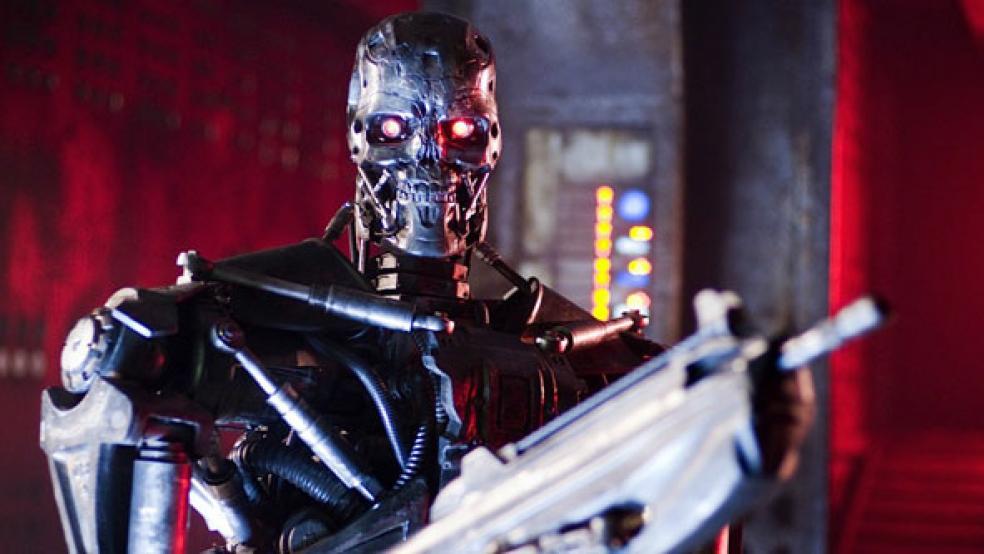

Are Terminators with American flags on their sleeves bound for combat anytime soon?

In an interview published today by Breaking Defense, Defense Secretary Ash Carter said that the U.S. would never unleash autonomous killing machines. But the military’s embrace of artificial intelligence is getting stronger, and Carter won’t be overseeing the Pentagon forever.

A Department of Defense directive mandating “appropriate levels of human judgment” over autonomous weapons, which was signed by Carter in 2012 when he was Deputy Defense Secretary, is set to expire next year. As Wired pointed out last week, either Hillary Clinton or Donald Trump will decide whether to unleash squads of robots outside of direct human control in combat.

This morning, on a trip back from Silicon Valley, where Carter had been continuing his aggressive outreach to tech companies, the Defense Secretary said that the use of force would always be under human control.

However, the debate over killer robots has begun, and it is far from over.

In July 2015, in an open letter on autonomous weapons signed by Elon Musk, Stephen Hawking and Steve Wozniak, AI and robotics researchers said that artificial intelligence has advanced far enough that deployment of “weapons [that] select and engage targets without human intervention…[such as] armed quadcopters that can search for and eliminate people meeting certain pre-defined criteria… are feasible within years, not decades, and the stakes are high: autonomous weapons have been described as the third revolution in warfare, after gunpowder and nuclear arms.”

One upside of replacing human soldiers with autonomous weapons is that casualties could be reduced, but the scientists signing the open letter worried that AI systems would lower the threshold for going to war.

“The key question for humanity today is whether to start a global AI arms race or to prevent it from starting,” they wrote. “If any major military power pushes ahead with AI weapon development, a global arms race is virtually inevitable, and the endpoint of this technological trajectory is obvious: autonomous weapons will become the Kalashnikovs of tomorrow. Unlike nuclear weapons, they require no costly or hard-to-obtain raw materials, so they will become ubiquitous and cheap for all significant military powers to mass-produce.

“It will only be a matter of time until they appear on the black market and in the hands of terrorists, dictators wishing to better control their populace, warlords wishing to perpetrate ethnic cleansing, etc. Autonomous weapons are ideal for tasks such as assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group. We therefore believe that a military AI arms race would not be beneficial for humanity. There are many ways in which AI can make battlefields safer for humans, especially civilians, without creating new tools for killing people.”

One counterargument appeared last month in a War on the Rocks article by three military thinkers. They wrote that while Western governments wrangle “over the precise wording of their self-imposed and self-important legal and ethical constraints on autonomous weapons,” American troops will be “at risk of suffering a decisive military disadvantage.”

“We should temper our concerns about ‘killer robots’ with the knowledge that our adversaries care about the morals and ethics of lethal, autonomous systems only insofar as those concerns give them a competitive advantage,” wrote retired Army Col. Joseph Brecher, Army Col. Heath Niemi and Army War College Professor Andrew Hill. “Paradoxically, it is the knowledge that the United States has the capability and capacity to wage war using fully autonomous, lethal robots that is most likely to persuade America’s potential adversaries to refrain from using or making escalating investments in these systems.”